There's a risk in your change programme that rarely makes it onto the risk register. And if you've been watching the AI conversation lately...I can guarantee you've seen it! It's not a toxic leader, a hostile stakeholder or an external 'threat'... It's someone capable and well-intentioned...but whose confidence has quietly outrun their calibration. It has a name. David Dunning and Justin Kruger documented it in 1999. Most importantly: before you look around the table and start spotting it in others, there's a quick game worth playing first.

Most leadership teams can’t answer this question…without looking at a slide. Can your leadership team name its 3-6 distinctive capabilities? Then: does your AI investment map to those, or does it map to a desire to look like you’re keeping up? The Coherence Premium isn’t just a strategy concept. It’s the difference between AI that builds momentum and AI that quietly drains it.

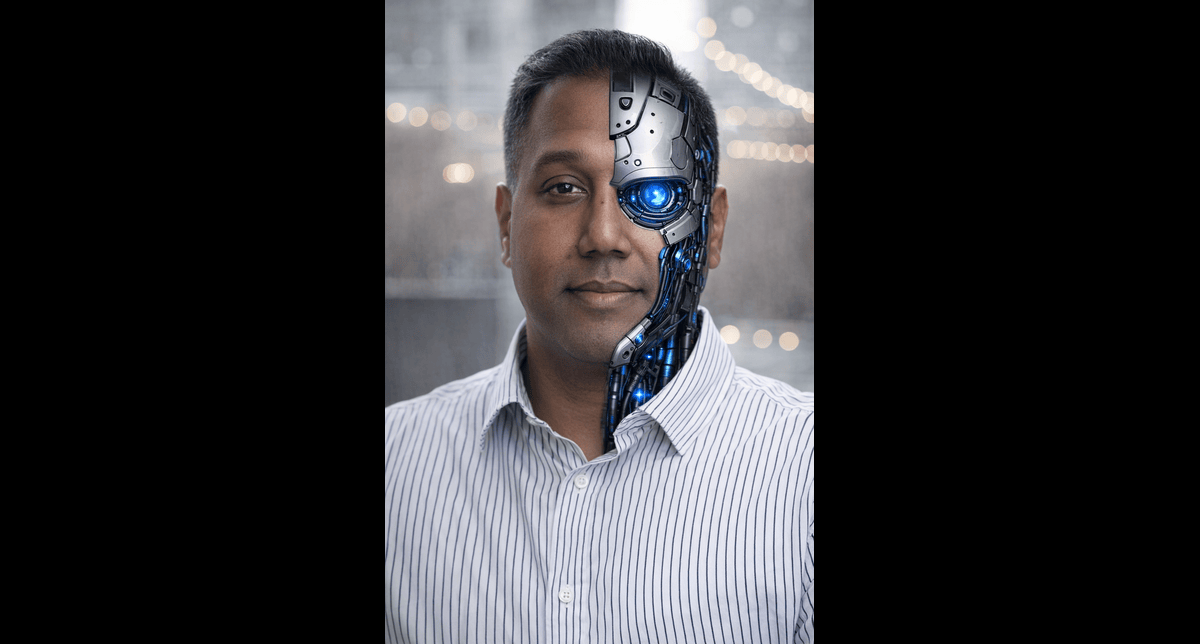

I got scared that AI would take my job.

Which is mildly ironic...given I've spent 20 years helping leaders navigate exactly this kind of moment.

Even more ironic? The tool that scared me has no emotions whatsoever.

There's something worth sitting with in that juxtaposition.

Then I was sent (by actual humans) two pieces of research that completely reframed what I thought I knew about the AI transition.

The headline finding: we've swapped the motor. We have not redesigned the factory. AI is wholly dependent on organisational change.

There's an essential leadership concept behind why so many hyped AI projects are underperforming.

Your people don’t trust each other enough to share their data...or to do the work to create meaningful, high-quality data.

No trust = no data.

No data = no AI impact.

Let's go back to the bottom of the pyramid. Here's Patrick Lencioni's perennial The Five Dysfunctions of a Team, viewed through an AI implementation lens: